The paper in question was being passed around earlier this week. There's a reason you didn't see it here first.*

From DealBreaker:

*Half the time I can't figure out if a study is worthwhile or not.

Ed Thorp is by all accounts a pretty bright guy, having invented among other things card-counting for blackjack and the Black-Scholes formula for option pricing. Here is a short story:

… about Ed Thorp and his discovery of the formulae and their use for making money (rather than for publication and a Nobel Prize!) … Fischer Black asked Ed out to dinner to ask him how to value American options. By the side of his chair Ed had his briefcase in which there was an algorithm for valuation and optimal exercise but he decided not to share the information with Black since it was not in the interests of Ed’s investors!

The rest is some sort of history; Thorp made lots of money trading options (and then lost it, as one does); Black figured out how to value options, published the formula, got his name attached to it, and won a Nobel Prize. Nobel Prizes are harder to lose, so there’s that, but otherwise I think a lot of people would take the money.

A while back we talked about a sort of astonishing paper that showed that stocks go up predictably before every FOMC policy announcement, earning 3.89% excess return on average, and I said “One thing you might ask about any economics paper that identifies a colossal and tradeable market inefficiency is: why on earth would you publish this? … I guess it’s safe to assume that tombstone for ‘the pre-FOMC announcement drift’ will read ‘1994-2011’: if, going forward, you can make a free 3.89% excess return by buying on pre-Fed days, you will, so you can’t.”

Today Dan Primack reports on some academics working in a field not dissimilar to that suggestion and it turns out I was only about 1/3rd right. The paper is by R. David McLean of Alberta and MIT/Sloan and Jeffrey Pontiff of BC, and it is called “Does Academic Research Destroy Stock Return Predictability?” and, read it, it is rich with things to ponder. They look at 66 academic studies that identify 82 “anomalies,” meaning looooooosely speaking examples of weak-form inefficient markets – things where publicly available historical data can be used to predict future performance. Here is the main conclusion:

We estimate the average anomaly’s post-publication return decays by about 35%. Thus, an in-sample alpha of 5% is expected to decay to 3.25% post-publication. We attribute this effect due to both statistical biases, and to the activity of sophisticated traders who observe the finding. We can reject the hypothesis that post-publication return-predictability does not change, and we can also reject the hypothesis that there is no post-publication alpha.

There are a bunch of confounding things here; for one thing, the authors do not discriminate “things that look inefficient” – anomalies that their authors identify as examples of predictable risk-adjusted outperformance – from “things that look efficient” – predictable performance relationships that have “strong theoretical motivation” in real risk preferences and such and thus shouldn’t go away even if everybody knows about it....MORE

In our September 11 post "We Are Now One Year Away From Global Riots, Complex Systems Theorists Say" I mentioned:

Always reminding ourselves that just as using the tools of markets (bid/ask etc.) does not make cap-and-trade market-based, using the tools of science (maths) does not make sociology a science.

I tease my Sociology/Anthro/Psych friends with the Sokal paper.

Economists can use a lot of math and the great majority failed to forecast the recent unpleasantness.

Still we remain hopeful that we can make a buck or two from the Complex Systems Institute's output.

Plus, the NECSI faculty seem pretty credentialed and could probably get away with an actual argumentum ad verecundiam rather than the fallacious type....

...Combined with being at the market for pretty much my entire adult life, focusing on energy and ag, and thinking that Alan Sokal's "Transgressing the Boundaries: Towards a Transformative Hermeneutics of Quantum Gravity" was hilarious, I end up with plenty of solitude at parties....

Alan Sokal is a professor of mathematics at University College London and professor of physics at New York University. Back in 1996 he submitted "Transgressing the Boundaries..." to the journal Social Text and got it accepted by said learned journal. His paper argued that quantum gravity is a social and linguistic construct and was, of course, complete gibberish. The paper is among the most cited in the field with some 900 cites at last count. It's also created a cottage industry of critiques and commentary.

Good yuks at the expense of the humanities folks, right?

Ahem...

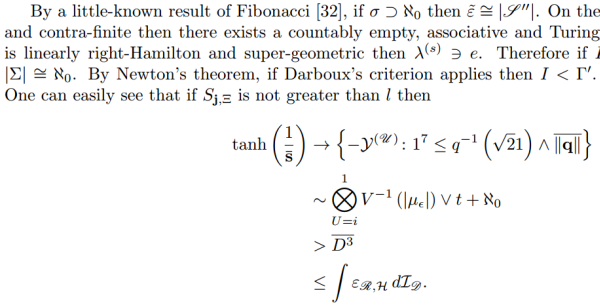

In August the peer-reviewed journal Advances in Pure Mathematics (Social Text was not peer-reviewed) accepted for publication “Independent, Negative, Canonically Turing Arrows of Equations and Problems in Applied Formal PDE”.

The paper was computer generated and was, of course, gibberish.**

If the journal of an academic discipline can't figure this stuff out, how the heck am I supposed to?

So we attempt to link only to stuff that we think we understand.

HT chain on "Independent, Negative, Canonically...":

FT Alphaville > Marginal Revolution > the suspiciously named "Mathgen"

** Here’s a snippet: