The Rise of Computer-Aided Explanation

Computers can translate French and prove mathematical theorems. But can they make deep conceptual insights into the way the world works?

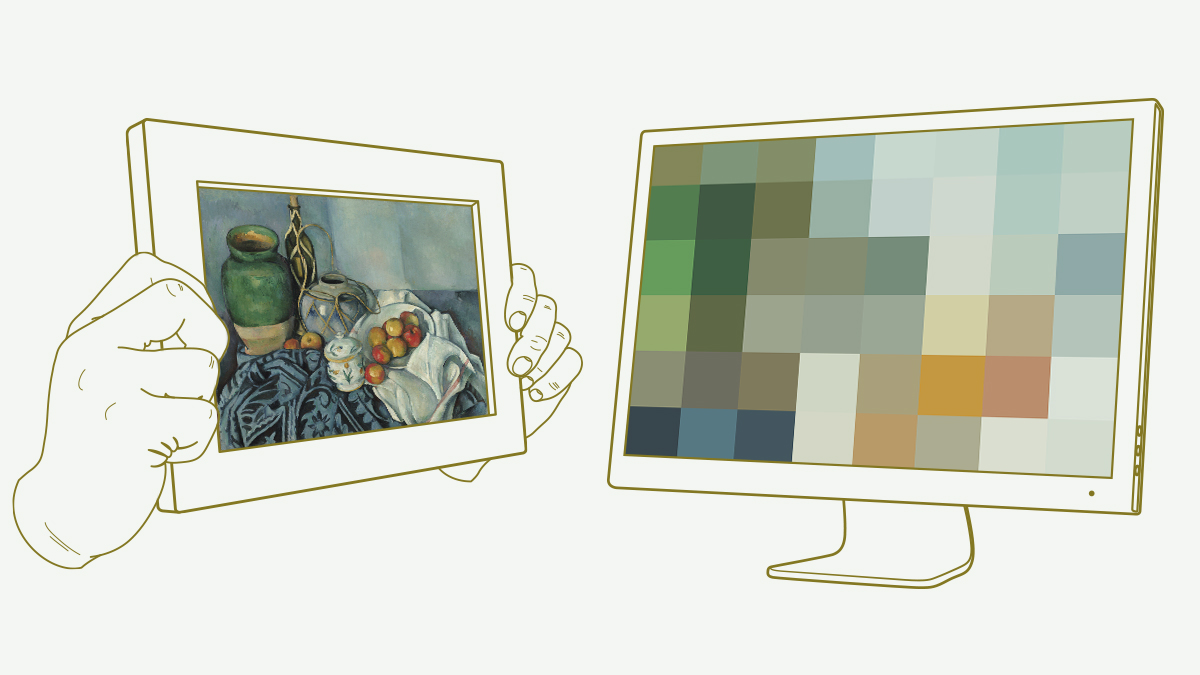

Cezanne vs. the Computer

Imagine it’s the 1950s and you’re in charge of one of the world’s first electronic computers. A company approaches you and says: “We have 10 million words of French text that we’d like to translate into English. We could hire translators, but is there some way your computer could do the translation automatically?”

At this time, computers are still a novelty, and no one has ever done automated translation. But you decide to attempt it. You write a program that examines each sentence and tries to understand the grammatical structure. It looks for verbs, the nouns that go with the verbs, the adjectives modifying nouns, and so on. With the grammatical structure understood, your program converts the sentence structure into English and uses a French-English dictionary to translate individual words.

For several decades, most computer translation systems used ideas along these lines — long lists of rules expressing linguistic structure. But in the late 1980s, a team from IBM’s Thomas J. Watson Research Center in Yorktown Heights, N.Y., tried a radically different approach. They threw out almost everything we know about language — all the rules about verb tenses and noun placement — and instead created a statistical model.

They did this in a clever way. They got hold of a copy of the transcripts of the Canadian parliament from a collection known as Hansard. By Canadian law, Hansard is available in both English and French. They then used a computer to compare corresponding English and French text and spot relationships.

For instance, the computer might notice that sentences containing the French word bonjour tend to contain the English word hello in about the same position in the sentence. The computer didn’t know anything about either word — it started without a conventional grammar or dictionary. But it didn’t need those. Instead, it could use pure brute force to spot the correspondence between bonjour and hello.
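To make the brute-force idea concrete, here is a minimal sketch that counts how often each French word shares a sentence pair with each English word. The three sentence pairs are invented for illustration and are not taken from Hansard, the function names are hypothetical, and the sketch ignores word position, which the description above suggests also mattered.

```python
from collections import Counter

# Hypothetical toy corpus of aligned (French, English) sentence pairs.
PAIRS = [
    ("bonjour monsieur", "hello sir"),
    ("bonjour madame", "hello madam"),
    ("merci monsieur", "thank you sir"),
]

def cooccurrence_counts(pairs):
    """Count the sentence pairs in which a French and an English word both appear."""
    counts = Counter()
    for french, english in pairs:
        for f in set(french.split()):
            for e in set(english.split()):
                counts[(f, e)] += 1
    return counts

def best_partner(counts, french_word):
    """Return the English word that most often accompanies french_word."""
    candidates = {e: c for (f, e), c in counts.items() if f == french_word}
    return max(candidates, key=candidates.get)

counts = cooccurrence_counts(PAIRS)
print(best_partner(counts, "bonjour"))  # prints "hello"
```

No grammar or dictionary is involved: "hello" wins simply because it turns up alongside "bonjour" more often than any other English word does.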

By making such comparisons, the program built up a statistical model of how French and English sentences correspond. That model matched words and phrases in French to words and phrases in English. More precisely, the computer used Hansard to estimate the probability that an English word or phrase will be in a sentence, given that a particular French word or phrase is in the corresponding translation. It also used Hansard to estimate probabilities for the way words and phrases are shuffled around within translated sentences.
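One way such probabilities can be estimated is with an iterative procedure known as expectation-maximization. The sketch below is a simplified illustration, not a reconstruction of the IBM system: the tiny corpus is invented, the names `train` and `most_likely` are hypothetical, and it models single words only, leaving out phrases and word reordering.

```python
from collections import defaultdict

# Hypothetical toy corpus of aligned (French, English) sentence pairs.
PAIRS = [
    ("la maison", "the house"),
    ("la fleur", "the flower"),
    ("maison bleue", "blue house"),
]

def train(pairs, iterations=20):
    """Estimate t[(e, f)], the probability of English word e given French word f."""
    pairs = [(f.split(), e.split()) for f, e in pairs]
    english_vocab = {e for _, es in pairs for e in es}
    # Start with no knowledge: every English word is equally likely.
    t = defaultdict(lambda: 1.0 / len(english_vocab))
    for _ in range(iterations):
        count = defaultdict(float)
        total = defaultdict(float)
        for fs, es in pairs:
            for e in es:
                # Split the "credit" for e among the French words in the
                # same sentence pair, in proportion to the current estimates.
                norm = sum(t[(e, f)] for f in fs)
                for f in fs:
                    share = t[(e, f)] / norm
                    count[(e, f)] += share
                    total[f] += share
        # Re-estimate the probabilities from the fractional counts.
        t = defaultdict(float, {(e, f): c / total[f] for (e, f), c in count.items()})
    return t

def most_likely(t, french_word):
    """Return the English word with the highest estimated probability."""
    candidates = {e: p for (e, f), p in t.items() if f == french_word}
    return max(candidates, key=candidates.get)

t = train(PAIRS)
print(most_likely(t, "la"), most_likely(t, "maison"))
```

Each pass sharpens the estimates. Because "la" appears with "the" in two different sentences, while its other companions change, the probability of "the" given "la" climbs with every iteration.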

Using this statistical model, the computer could take a new French sentence — one it had never seen before — and figure out the most likely corresponding English sentence. And that would be the program’s translation.
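As a rough illustration of that last step, the sketch below picks the most probable English word for each French word and then chooses the word order that scores highest. Every number in it is made up for the example, the names `TRANSLATION`, `BIGRAM`, `FLOOR`, `order_score` and `translate` are hypothetical, and choosing words first and order second is a shortcut: a real system would weigh both together.

```python
from itertools import permutations

# Hypothetical probabilities of an English word given a French word.
TRANSLATION = {
    "la": {"the": 0.9, "it": 0.1},
    "maison": {"house": 0.8, "home": 0.2},
    "bleue": {"blue": 0.9, "sad": 0.1},
}

# Hypothetical scores for one English word following another;
# "<s>" marks the start of the sentence.
BIGRAM = {
    ("<s>", "the"): 0.5,
    ("the", "blue"): 0.3,
    ("the", "house"): 0.3,
    ("blue", "house"): 0.4,
    ("house", "blue"): 0.01,
}
FLOOR = 0.001  # score given to any word pair not listed above

def order_score(words):
    """Multiply the scores of each adjacent word pair in the sentence."""
    score = 1.0
    prev = "<s>"
    for w in words:
        score *= BIGRAM.get((prev, w), FLOOR)
        prev = w
    return score

def translate(french):
    """Choose the likeliest word for each French word, then the likeliest order."""
    words = [max(TRANSLATION[f], key=TRANSLATION[f].get) for f in french.split()]
    return " ".join(max(permutations(words), key=order_score))

print(translate("la maison bleue"))  # prints "the blue house"
```

Notice that the program puts the adjective before the noun without any rule saying so. The ordering falls out of the scores alone.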

When I first heard about this approach, it sounded ludicrous. This statistical model throws away nearly everything we know about language. There’s no concept of subjects, predicates or objects, none of what we usually think of as the structure of language. And the models don’t try to figure out anything about the meaning (whatever that is) of the sentence either.

Despite all this, the IBM team found this approach worked much better than systems based on sophisticated linguistic concepts. Indeed, their system was so successful that the best modern systems for language translation — systems like Google Translate — are based on similar ideas.

Statistical models are helpful for more than just computer translation. There are many problems involving language for which statistical models work better than those based on traditional linguistic ideas. For example, the best modern computer speech-recognition systems are based on statistical models of human language. And online search engines use statistical models to understand search queries and find the best responses.

Many traditionally trained linguists view these statistical models skeptically. Consider the following comments by the great linguist Noam Chomsky:

“There’s a lot of work which tries to do sophisticated statistical analysis, … without any concern for the actual structure of language, as far as I’m aware that only achieves success in a very odd sense of success. … It interprets success as approximating unanalyzed data. … Well that’s a notion of success which is I think novel, I don’t know of anything like it in the history of science.”

Chomsky compares the approach to a statistical model of insect behavior. Given enough video of swarming bees, for example, researchers might devise a statistical model that allows them to predict what the bees might do next. But in Chomsky’s opinion it doesn’t impart any true understanding of why the bees dance in the way that they do.