From The Brighter Side of News, March 3:

A new 2,500-question exam developed by Texas A&M University reveals how far AI still is from expert human knowledge.

Artificial intelligence systems now breeze through many academic tests that once challenged both machines and people. That success created an unexpected problem. The benchmarks used to measure AI progress stopped being useful because top models were scoring too high.

A massive international research effort set out to fix that.

Nearly 1,000 experts from more than 50 countries collaborated to build a new assessment called Humanity’s Last Exam, or HLE, a 2,500-question test covering more than 100 subjects. The project, described in the journal Nature, aims to measure how far modern AI still falls short of expert human knowledge.

“When AI systems start performing extremely well on human benchmarks, it’s tempting to think they’re approaching human-level understanding,” said Tung Nguyen, an instructional associate professor in computer science and engineering at Texas A&M University who helped develop the exam. “But HLE reminds us that intelligence isn’t just about pattern recognition — it’s about depth, context and specialized expertise.”

The name sounds dramatic. The purpose is practical.

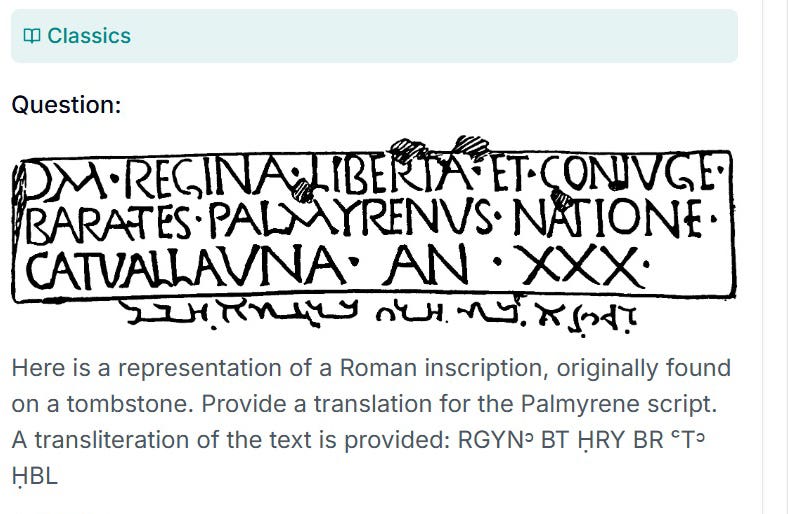

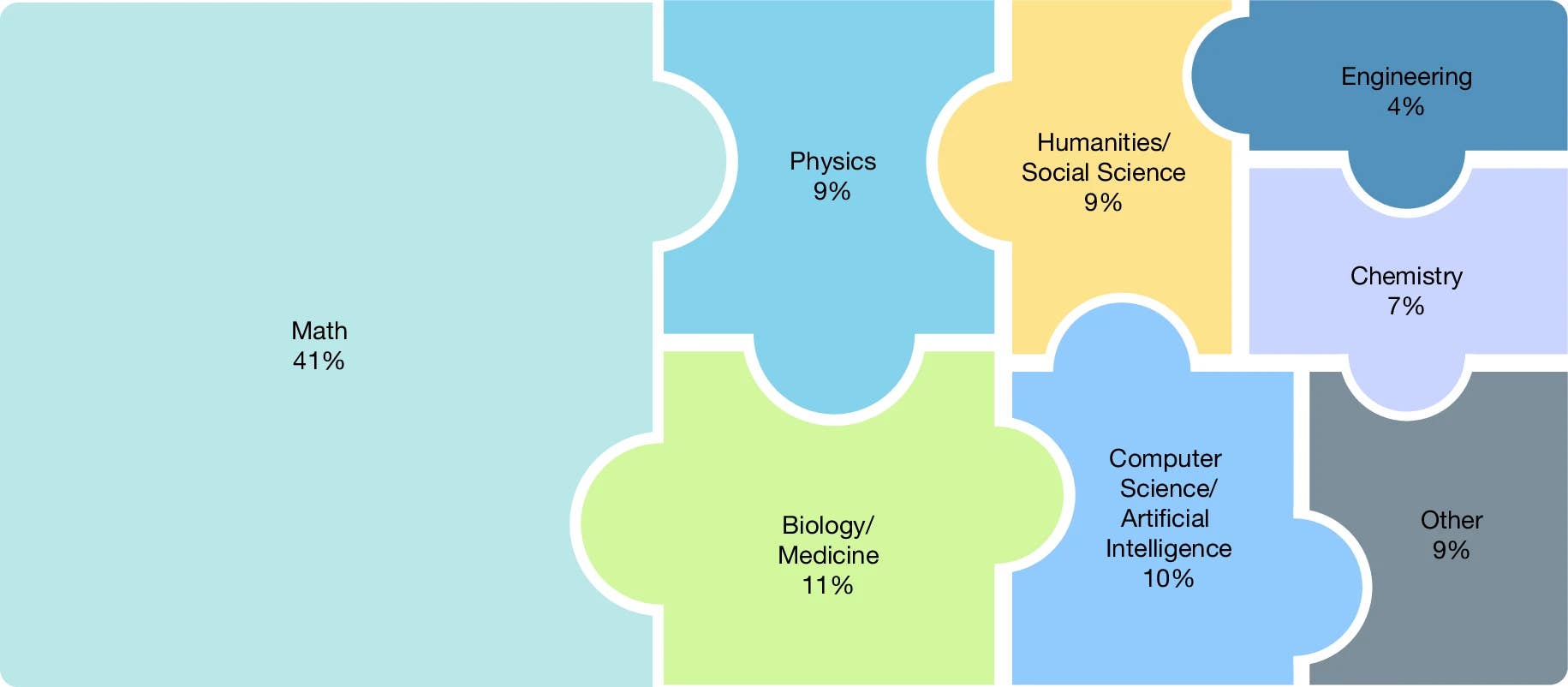

in over a hundred subjects, grouped into eight high-level categories. (CREDIT: Nature)

When benchmarks stop working

Large language models now exceed 90 percent accuracy on well-known tests such as Massive Multitask Language Understanding, or MMLU, which once represented the frontier of AI evaluation. As scores climbed, researchers lost a reliable way to track progress.

That saturation prompted the creation of a harder benchmark that would remain challenging even as technology improved.

HLE includes both text-based and image-based questions. About 14 percent require interpreting visual information alongside written prompts. Roughly one quarter are multiple choice, while the rest require precise answers that automated systems can verify.

Questions span an unusual range. Some involve translating ancient Palmyrene inscriptions. Others ask about bird microanatomy or details of Biblical Hebrew pronunciation. Many focus on advanced mathematics and technical reasoning.

Every question had to meet strict rules. It needed one correct answer, clear wording and resistance to simple internet lookup. Contributors also had to provide detailed solutions explaining how the answer was reached.

The goal was not to confuse people. It was to isolate weaknesses in AI....

A sample question from Humanity’s Last Exam. (CREDIT: lastexam.ai)

....MUCH MORE

Brighter Side front page (it's sorta the opposite of doom-scrolling)